Table of contents

- How to Understand OpenClaw
  - The Foundation: Model Inference Services
  - Conversational Memory: The Memory Mechanism
  - External Knowledge: RAG (Retrieval-Augmented Generation)
  - Tool Calling: The MCP Protocol
  - Workflow Planning: Skills
  - The Full Agent: AI Agent
  - OpenClaw: When the Agent Really Takes Over Your Computer
- The Story Behind OpenClaw's Viral Rise
  - Peter Steinberger
  - The Triple Rebrand
  - Growth Timeline
  - Why OpenClaw?

How to Understand OpenClaw

In this chapter you'll learn how concepts like inference services, Memory, RAG, MCP, Skills, and Agents relate to OpenClaw.

OpenClaw is an open-source, self-hostable personal AI Agent platform.

It runs on your own machine (laptop or VPS), and connects to the chat channels you already use (WhatsApp, Telegram, Feishu, and 22+ other platforms). It doesn't just chat — it executes tasks: read and write files, handle email, run code, drive the browser, and orchestrate workflows.

In one sentence: an Agent runtime plus gateway that sits between your messaging apps and your tool chain, online 24/7.

The sections below build up to OpenClaw one layer at a time, starting from the foundational concepts behind any Agent.

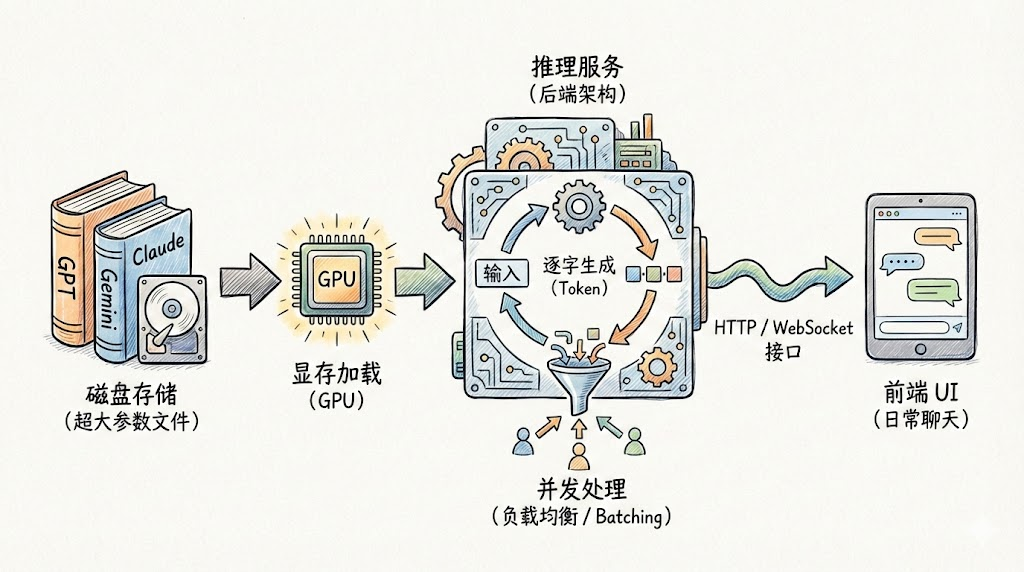

The Foundation: Model Inference Services

Large models like GPT and DeepSeek are essentially enormous parameter files stored on disk (often tens or even hundreds of gigabytes).

To put a large model to work, you need a specialized backend that loads it into GPU memory and exposes an HTTP or WebSocket interface.

The service that receives user requests, runs the matrix math, and generates replies token by token is the inference service.
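To make "generates replies token by token" concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: `TOY_WEIGHTS` is a made-up lookup table standing in for model parameters, and `handle_request` is a hypothetical handler, not the API of any real inference server. A real model also conditions on the whole context rather than only the previous token.

```python
from typing import Dict, Iterator, List

# Hypothetical stand-in for model weights: a tiny next-token lookup table.
# A real service would load billions of parameters into GPU memory instead.
TOY_WEIGHTS: Dict[str, str] = {
    "<start>": "hello",
    "hello": "from",
    "from": "the",
    "the": "model",
    "model": "<end>",
}


def generate(prompt_tokens: List[str], max_new_tokens: int = 16) -> Iterator[str]:
    """Yield one token at a time, each chosen from the previous token."""
    last = prompt_tokens[-1] if prompt_tokens else "<start>"
    for _ in range(max_new_tokens):
        next_token = TOY_WEIGHTS.get(last, "<end>")
        if next_token == "<end>":
            return
        yield next_token
        last = next_token


def handle_request(body: dict) -> dict:
    """Stateless handler: everything it needs arrives inside the request body."""
    prompt_tokens = body.get("prompt", "").split()
    tokens = list(generate(prompt_tokens, body.get("max_tokens", 16)))
    return {"text": " ".join(tokens), "tokens_generated": len(tokens)}
```

Calling `handle_request({"prompt": "say hello", "max_tokens": 2})` continues from the last prompt token and stops after two generated tokens. Nothing is kept between calls.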

Conversational Memory: The Memory Mechanism

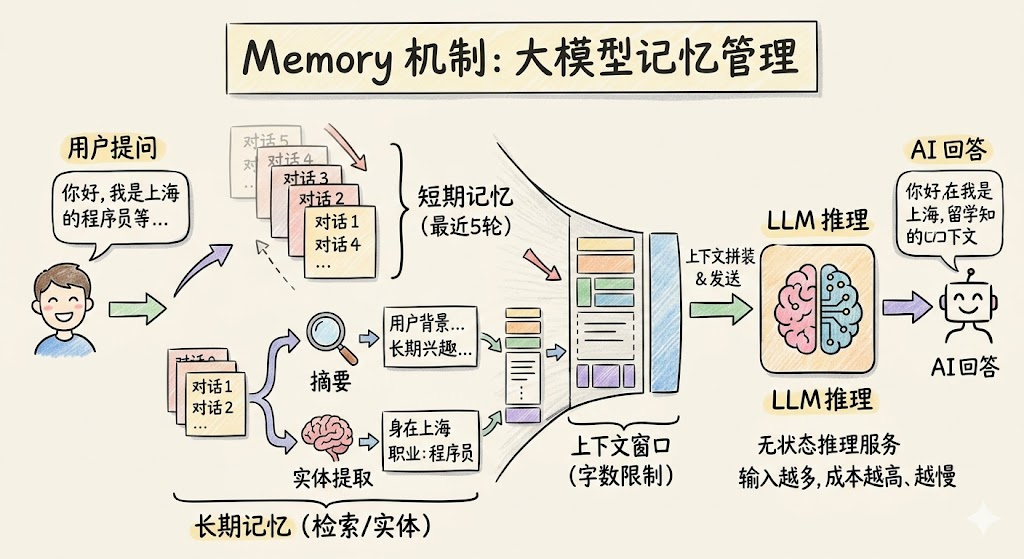

The inference service itself is a stateless HTTP service. Once a request finishes it retains no data, and different requests may even be handled by different instances.

Every model call has a token limit (the context window), and more input means higher cost and slower responses. So you can't just dump the entire history into every call — memory has to be layered and managed on demand:

- Short-term memory: keeps the last few turns verbatim to preserve conversational coherence.

- Long-term memory: for older conversations, a background model compresses lengthy histories into brief summaries, or extracts structured entity facts (e.g. "user is a programmer based in Shanghai") and stores them in a database.

Every time the user asks a new question, the system first retrieves and assembles the relevant memory fragments and sends them to the model along with the current question.

This mechanism, which manages conversational context so that the AI appears to have a memory, is what we call Memory.
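The layering described above can be sketched roughly as follows. This is an illustration under stated assumptions, not how any particular product implements Memory: the `LayeredMemory` class is hypothetical, `_summarize` merely truncates text where a real system would call a background model, and relevance is judged by naive word overlap instead of proper retrieval.

```python
import re
from dataclasses import dataclass, field
from typing import List, Set, Tuple


def words(text: str) -> Set[str]:
    """Lowercased alphabetic words, used for a naive relevance check."""
    return set(re.findall(r"[a-z]+", text.lower()))


@dataclass
class LayeredMemory:
    short_term_turns: int = 2  # how many recent turns are kept verbatim
    turns: List[Tuple[str, str]] = field(default_factory=list)  # (role, text)
    summary: str = ""  # compressed older history
    facts: List[str] = field(default_factory=list)  # extracted entity facts

    def add_turn(self, role: str, text: str) -> None:
        self.turns.append((role, text))
        # Turns that fall out of the short-term window are folded into the summary.
        while len(self.turns) > self.short_term_turns:
            old_role, old_text = self.turns.pop(0)
            self.summary = self._summarize(self.summary, old_role, old_text)

    @staticmethod
    def _summarize(summary: str, role: str, text: str) -> str:
        # Placeholder for a background model call: keep the first 40 characters.
        prefix = summary + " | " if summary else ""
        return f"{prefix}{role}: {text[:40]}"

    def build_prompt(self, question: str) -> str:
        # Include only facts that share at least one word with the question.
        relevant = [f for f in self.facts if words(question) & words(f)]
        parts: List[str] = []
        if relevant:
            parts.append("Known facts: " + "; ".join(relevant))
        if self.summary:
            parts.append("Earlier conversation summary: " + self.summary)
        parts.extend(f"{role}: {text}" for role, text in self.turns)
        parts.append(f"user: {question}")
        return "\n".join(parts)
```

The design point is that the prompt sent to the model is rebuilt on every call from three sources (facts, summary, recent turns), so its size stays bounded no matter how long the conversation runs.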

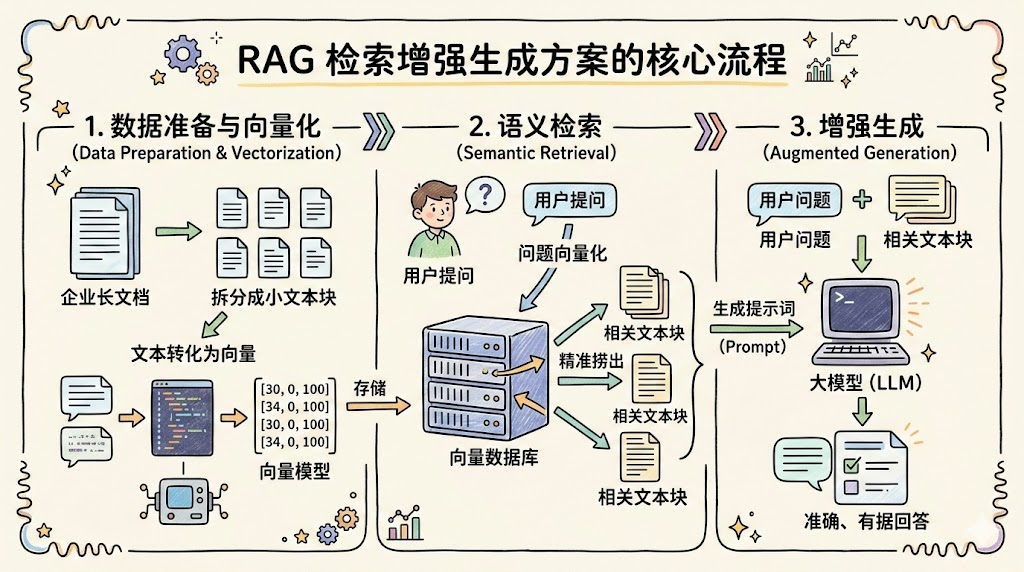

External Knowledge: RAG (Retrieval-Augmented Generation)

A large model's knowledge is bounded by its training data. Once training finishes, that knowledge is frozen: the model can't answer questions about real-time news or look up your company's internal documents, and when asked anyway it tends to hallucinate a plausible-sounding answer.

RAG is the most mature approach to solving this today. The core idea: look things up first, then answer.
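"Look things up first, then answer" can be sketched in a few lines. The example below is a toy under explicit assumptions: `DOCUMENTS` is invented sample data, and word-count cosine similarity stands in for the embedding model and vector store a production RAG pipeline would normally use.

```python
import math
import re
from collections import Counter
from typing import List, Tuple

# Invented sample knowledge base for illustration only.
DOCUMENTS = [
    "Expense reports must be submitted within 30 days of purchase.",
    "The VPN requires two-factor authentication for all remote staff.",
    "Annual leave requests are approved by your direct manager.",
]


def vectorize(text: str) -> Counter:
    """Bag-of-words vector: word -> count."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], top_k: int = 1) -> List[Tuple[float, str]]:
    """Step 1: look things up. Returns the best-matching documents with scores."""
    q = vectorize(query)
    scored = sorted(((cosine(q, vectorize(d)), d) for d in documents), reverse=True)
    return [(score, doc) for score, doc in scored[:top_k] if score > 0]


def build_prompt(query: str, documents: List[str]) -> str:
    """Step 2: hand the retrieved context to the model along with the question."""
    hits = retrieve(query, documents)
    context = "\n".join(f"- {doc}" for _, doc in hits) or "- (no relevant documents found)"
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"
```

The string returned by `build_prompt` is what would be sent to the inference service, so the model answers from retrieved text instead of from its frozen training data.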

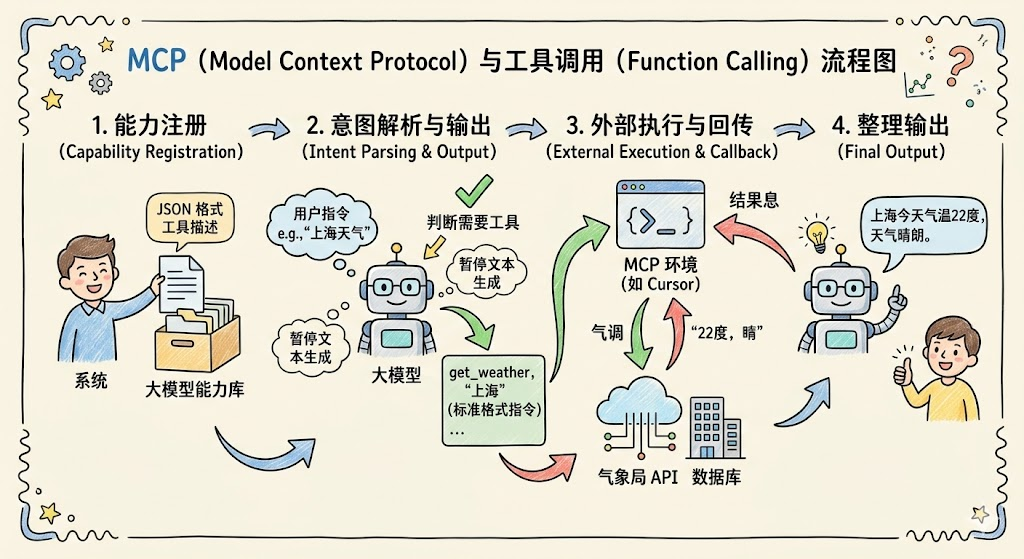

Tool Calling: The MCP Protocol

With Memory and RAG, the model has internal memory and external knowledge, but it's still a closed text-processing system running on a server. It can't actually fetch the weather, read and write local files, or query a database.

To break out of that cage, the industry developed tool-calling capabilities. MCP (Model Context Protocol) is an open protocol specification that standardizes how models talk to external tools.
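As a rough illustration of what "talking to a tool" looks like on the wire, the sketch below handles two JSON-RPC 2.0 messages shaped like MCP's `tools/list` and `tools/call` requests. It is a simplified, in-process toy, not the official MCP SDK: it skips the initialization handshake and the transport layer, and `get_weather` is a hypothetical tool returning canned data.

```python
import json
from typing import Any, Dict


def get_weather(city: str) -> str:
    # Hypothetical tool with canned data; a real tool would call a weather API.
    return f"Sunny in {city}"


TOOLS: Dict[str, Dict[str, Any]] = {
    "get_weather": {
        "handler": get_weather,
        "description": "Return the current weather for a city.",
        "inputSchema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    }
}


def _error(request_id: Any, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": request_id,
                       "error": {"code": code, "message": message}})


def handle_message(raw: str) -> str:
    """Dispatch one JSON-RPC request and return the JSON-encoded response."""
    request = json.loads(raw)
    request_id = request.get("id")
    method = request.get("method")
    params = request.get("params", {})

    if method == "tools/list":
        # Advertise each tool's name, description, and JSON Schema for its input.
        result = {"tools": [
            {"name": name, "description": tool["description"], "inputSchema": tool["inputSchema"]}
            for name, tool in TOOLS.items()
        ]}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(request_id, -32602, "Unknown tool")
        text = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return _error(request_id, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})
```

The model never runs `get_weather` itself. It emits a structured request naming the tool and its arguments, and the host executes the call and feeds the result back as text.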

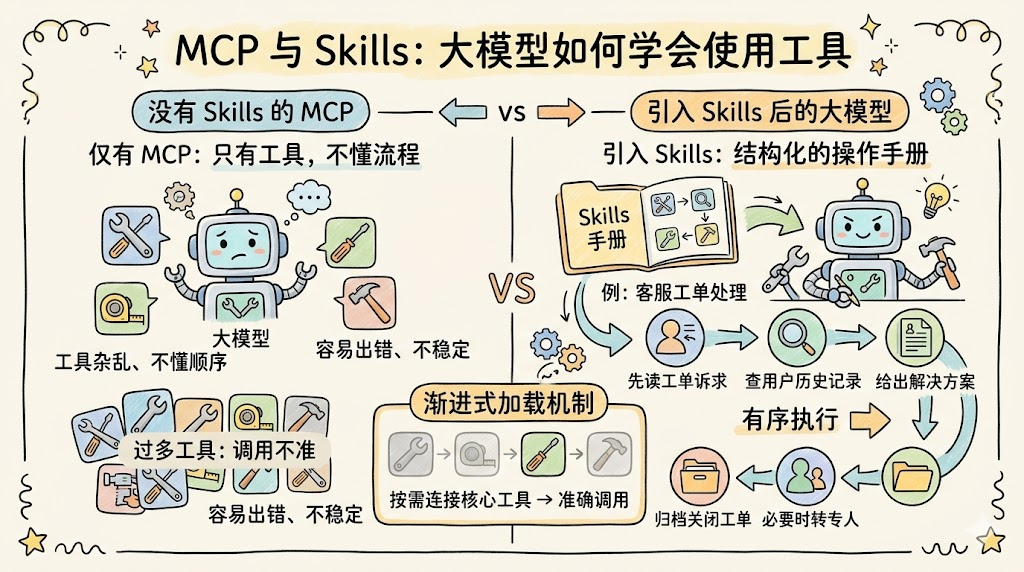

Workflow Planning: Skills

MCP answers "can I call a tool," but the model still doesn't know when to use which tool, in what order, or how to combine them. It is like having a full toolbox but no repair manual.

Skills are structured runbooks: for a specific scenario, they spell out the order in which tools should be used, the execution logic, and any caveats.

Example: in a customer-support ticket scenario, MCP provides the tools to fetch user profiles, look up business records, send canned replies, escalate to a human, or close the ticket. The Skill defines the full flow: read ticket intent → look up user history → propose a resolution → escalate if needed → archive and close.

Skills also solve a practical problem: with too many tools connected at once, tool calls become unreliable. Skills' progressive loading mechanism keeps only the relevant tools in context.
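One way to picture progressive loading is a two-stage lookup, sketched below. The `Skill` structure, the tool names, and the second `expense_report` skill are all hypothetical, loosely modeled on the customer-support example above. This is not OpenClaw's actual Skill format.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Skill:
    name: str
    description: str  # one-line summary, always visible to the model
    steps: List[str]  # ordered procedure, loaded only once the skill is chosen
    tools: List[str]  # the only tools exposed while this skill runs


# Hypothetical skill registry for illustration.
SKILLS: Dict[str, Skill] = {
    "support_ticket": Skill(
        name="support_ticket",
        description="Resolve a customer-support ticket",
        steps=[
            "read ticket intent",
            "look up user history",
            "propose a resolution",
            "escalate if needed",
            "archive and close",
        ],
        tools=["fetch_user_profile", "lookup_business_records",
               "send_canned_reply", "escalate_to_human", "close_ticket"],
    ),
    "expense_report": Skill(
        name="expense_report",
        description="File an expense report from a receipt",
        steps=["read receipt", "fill in the form", "submit for approval"],
        tools=["read_receipt", "submit_expense"],
    ),
}


def skill_index() -> List[str]:
    """Stage 1: only names and one-line descriptions enter the context."""
    return [f"{s.name}: {s.description}" for s in SKILLS.values()]


def load_skill(name: str) -> Dict[str, List[str]]:
    """Stage 2: the full procedure and tool list are loaded for one skill only."""
    skill = SKILLS[name]
    return {"steps": skill.steps, "tools": skill.tools}
```

With this split, the model first sees a short index, and the detailed steps and tool definitions for unrelated skills never occupy the context window.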

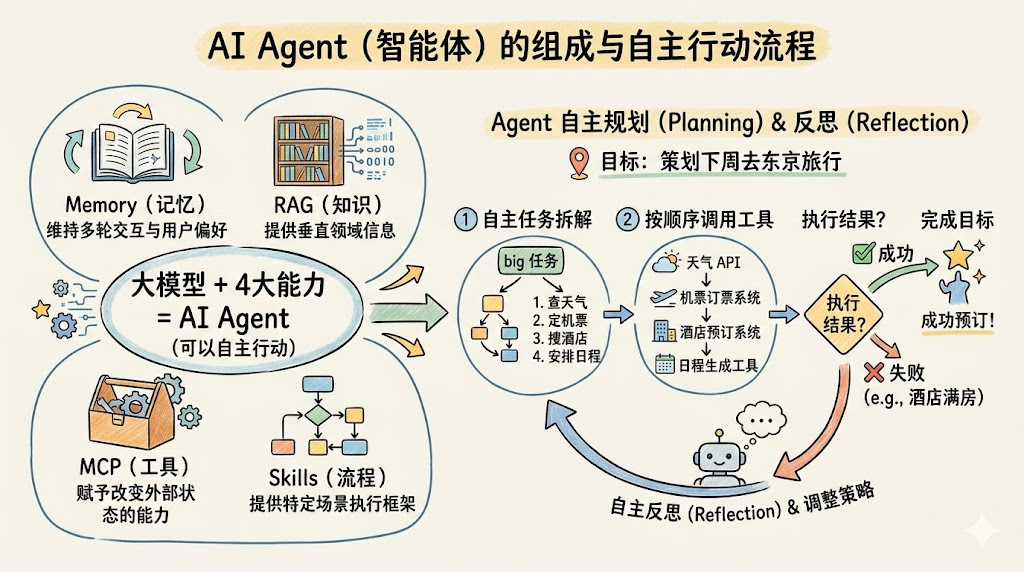

The Full Agent: AI Agent

When a large model has all of the following capabilities, it becomes a system that can act autonomously and complete goals — an AI Agent:

- Memory: maintains state and user preferences across multiple turns.

- RAG: provides the domain knowledge needed to carry out the task.

- MCP: gives the system the ability to change external state.

- Skills: provides an execution framework for specific scenarios.
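Put together, these capabilities usually run inside a loop: decide, act, observe, repeat. The sketch below shows that loop in its barest form. It rests on heavy assumptions: `scripted_model` is a hard-coded stand-in for a real LLM call, and `lookup_order` is a hypothetical tool with canned output.

```python
from typing import Callable, Dict, List, Optional, Tuple


def lookup_order(order_id: str) -> str:
    # Hypothetical tool; a real one would query an order database.
    return f"Order {order_id} shipped on Monday"


TOOLS: Dict[str, Callable[[str], str]] = {"lookup_order": lookup_order}


def scripted_model(goal: str, observations: List[str]) -> Tuple[str, Optional[str], str]:
    """Stand-in for an LLM. Returns (action, tool_name, tool_argument_or_final_answer)."""
    if not observations:
        # Nothing is known yet, so ask for a tool call first.
        return ("call_tool", "lookup_order", "A123")
    # An observation is available, so finish with an answer based on it.
    return ("finish", None, f"Answer: {observations[-1]}")


def run_agent(goal: str, max_steps: int = 5) -> str:
    """Decide, act, observe, and repeat until the model finishes or steps run out."""
    observations: List[str] = []
    for _ in range(max_steps):
        action, tool_name, payload = scripted_model(goal, observations)
        if action == "finish":
            return payload
        # Execute the requested tool and feed the result back into the next step.
        observations.append(TOOLS[tool_name](payload))
    return "Stopped: step limit reached"
```

The `max_steps` cap matters in practice: without it, a model that keeps requesting tools would loop indefinitely.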

OpenClaw: When the Agent Really Takes Over Your Computer

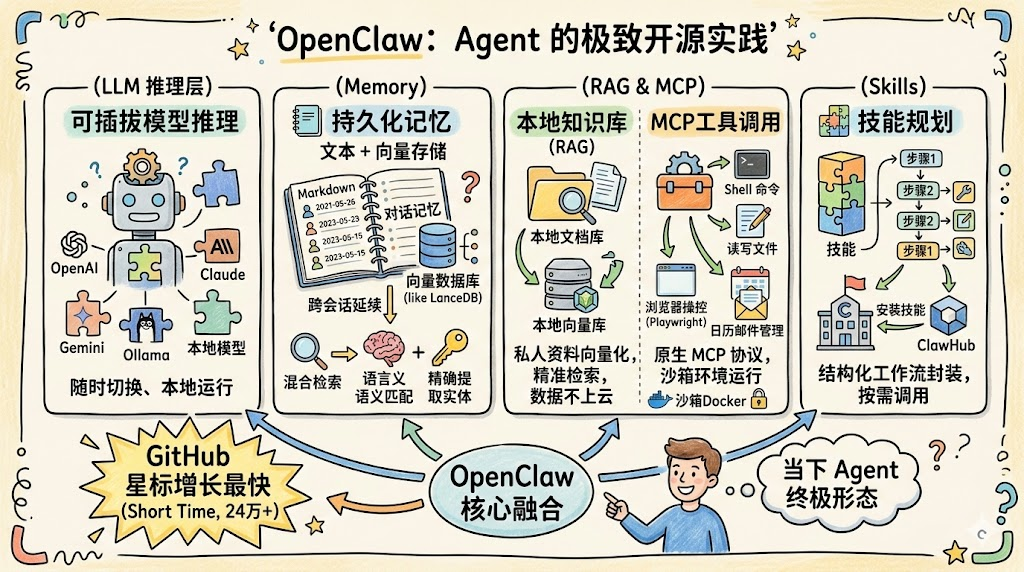

If everything above is the theory of Agents, OpenClaw is the most extreme, most ambitious open-source engineering realization of that theory available today.

It weaves together Memory, RAG, MCP, and Skills into what feels like the ultimate form of a modern AI Agent:

- OpenClaw's underlying inference service is fully pluggable — you can switch between OpenAI, Anthropic, and local models at any time.

- OpenClaw ships with a persistent memory system, using files and a vector database to store long-term memory. It uses a hybrid vector-plus-keyword retrieval strategy: semantic matching can recall old conversations while entity extraction pulls out precise facts, and memory persists across sessions and projects.

- OpenClaw can directly index your local folders and document libraries, vectorizing private material into a local vector store.

- OpenClaw natively integrates the MCP protocol, turning your computer into a toolkit that the AI can operate.

- OpenClaw encapsulates complex workflows into reusable Skills — write your own or install them from the community (ClawHub).
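The hybrid vector-plus-keyword retrieval mentioned in the list above can be illustrated generically. The sketch below is not OpenClaw's implementation. It assumes a simple weighted sum of the two scores, and the three-dimensional embeddings in `MEMORIES` are made-up numbers standing in for the output of a real embedding model.

```python
import math
import re
from typing import List, Sequence, Tuple

# (text, toy embedding). The vectors are invented for illustration.
MEMORIES: List[Tuple[str, List[float]]] = [
    ("User's cat is named Miso", [0.9, 0.1, 0.0]),
    ("Project Falcon deadline is Friday", [0.1, 0.9, 0.1]),
    ("User enjoys hiking on weekends", [0.2, 0.1, 0.9]),
]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query: str, text: str) -> float:
    """Fraction of query words that appear verbatim in the text."""
    q = set(re.findall(r"[a-z0-9]+", query.lower()))
    t = set(re.findall(r"[a-z0-9]+", text.lower()))
    return len(q & t) / len(q) if q else 0.0


def hybrid_search(query: str, query_embedding: Sequence[float],
                  alpha: float = 0.5) -> List[Tuple[float, str]]:
    """Rank memories by alpha * semantic score + (1 - alpha) * keyword score."""
    scored = [
        (alpha * cosine(query_embedding, emb) + (1 - alpha) * keyword_score(query, text), text)
        for text, emb in MEMORIES
    ]
    return sorted(scored, reverse=True)
```

Here `alpha` trades fuzzy semantic recall against exact keyword matches. Setting it to 0 falls back to pure keyword search, which helps when a query contains a precise name such as "Falcon".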

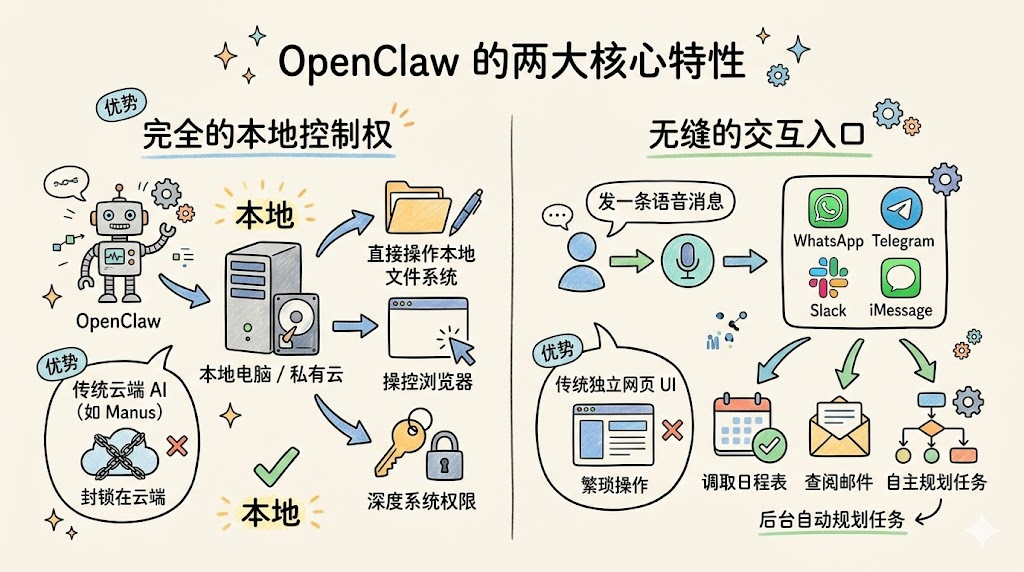

It also has two other defining characteristics:

- Full local control: unlike cloud-locked AI products (such as Manus), OpenClaw is an open-source framework that runs on your own computer or private cloud. That means it can directly operate your local file system and browser, and can even be granted deep system permissions.

- Seamless entry points: it skips the standalone web UI and embeds straight into WhatsApp, Telegram, or Feishu — apps you already use daily. Send one voice note and it will check your calendar, scan your email, and plan the task in the background.

The Story Behind OpenClaw's Viral Rise

In this chapter you'll walk through how OpenClaw became a phenomenon.

Peter Steinberger

Peter Steinberger is an Austrian developer. He previously founded PSPDFKit, a PDF toolkit used by nearly a billion users, which he sold in 2023 for around €100 million.

One day in November 2025, frustrated that "a tool like this didn't already exist," he spent an hour building the first OpenClaw prototype.

The Triple Rebrand

This project went through what's arguably the most dramatic naming story in AI history:

| Phase | Name | Dates | Reason |
|---|---|---|---|
| Initial | Clawdbot | November 2025 – January 27, 2026 | The earliest project name |
| Middle | Moltbot | January 27 – January 30, 2026 | Anthropic sent a trademark notice ("Clawdbot" contains a "Claude" sound). The community voted to rename it at 5 a.m. on Discord |
| Final | OpenClaw | January 30, 2026 – present | Emphasizes "open source" and "local-first"; trademark research and domain purchases completed |

The community affectionately calls it "The Fastest Triple Rebrand in AI History."

Growth Timeline

| Date | Milestone |
|---|---|
| November 2025 | Prototype launched |
| Late January 2026 | GitHub goes viral |
| Early February 2026 | 190K Stars in two weeks |
| February 15, 2026 | Steinberger announces he's joining OpenAI |
| March 3, 2026 | Breaks 250K Stars (it took React ten years to hit that number) |
| March 10, 2026 | 297K Stars, 56K Forks, 1000+ contributors |

Why OpenClaw?

It hit the market's pain point precisely:

Before OpenClaw, almost every AI product was closed and cloud-hosted. Users worried about privacy and complained the capabilities weren't enough — most AI assistants could only chat, not actually do anything.

OpenClaw's three-in-one combination of open source, self-hosting, and real action precisely answered what the market wanted most.

The tipping point for the Agent concept:

After a few years of market education, AI Agents were no longer a foreign concept.

OpenClaw provided the first concrete, tangible, deployable implementation, pulling an abstract concept into reality.

Community-driven viral spread:

From Discord to Reddit, from Hacker News to Bilibili, OpenClaw's lobster mascot Molty, the catchphrase "The claw is the law," and stories of various Agent mishaps created a highly contagious meme culture.